Part 2: TrueSkill Demystified

The Xbox matchmaking algorithm, applied to jewelry

Quick History: Elo and Its Problems

TrueSkill came from Elo,[1] the chess rating system from the 1960s. Elo's idea: give everyone a number, update it when they play, let the numbers converge to true skill over time.

Simple, but it has problems:

- New players are wild cards: A fresh account could be a grandmaster or a total beginner. Elo has no way to express this uncertainty.

- No confidence tracking: Someone rated 1500 after 3 games is very different from 1500 after 1000 games. Elo treats them the same.

- Draws are awkward: The original system wasn't really designed for ties.

In 2007, Microsoft Research built TrueSkill[2] to fix this for Xbox Live. It powers Halo and Gears of War matchmaking.

Turns out it works great for ranking pendants too.

Two Numbers, Not One

The key difference from Elo: TrueSkill tracks two things about each item.

| Symbol | What it is | What it means |

|---|---|---|

| Mean | Best guess at how much you like this | |

| Standard deviation | How uncertain we are about that guess |

New pendant enters the system? We start with:

μ = 25.0 # Middle of the range

σ = 8.333 # No idea if you'll love it or hate itThat high is the system saying "could be anywhere."

Figure 1: A new pendant enters the system with high uncertainty. The Gaussian prior is centered at with , representing our lack of prior knowledge about user preference.

Every Comparison Shrinks Uncertainty

Here's the neat part. When two pendants compete, both get more certain.

You pick A over B. What did we learn?

- A is probably better than we thought

- B is probably worse than we thought

- And we're more confident about both now, regardless of who won

Figure 2: A single comparison updates both pendants. The preferred item's increases while the other's decreases—and crucially, both values shrink.

One comparison: values diverge, values shrink.[3] That's Bayesian inference doing its thing.

The Code is Simple

ratingL, ratingR = create_ratings(pendantL, pendantR)

if user_chose_left:

new_ratingL, new_ratingR = trueskill.rate_1vs1(ratingL, ratingR)

else:

new_ratingR, new_ratingL = trueskill.rate_1vs1(ratingR, ratingL)rate_1vs1() handles all the Gaussian math. Factor graphs, message passing, belief propagation[2:1]—you don't have to think about it.

The Lucky Winner Problem

What if a pendant wins once by accident and looks great?

Pendant X: compared once, won. Now , .

Pendant Y: compared 20 times, won 15. Now , .

If we rank by alone, Y beats X. Good.

But what if X just got lucky? With , its true appeal could easily be much lower.

The fix: conservative score.

This is roughly a 99.7% confidence lower bound. Pessimistic, but stable.

Figure 3: The conservative score penalizes uncertainty. Pendant Y, with lower , outranks the "lucky" Pendant X despite X having fewer comparisons.

The conservative score penalizes uncertainty. You have to prove you're good through multiple comparisons—one win doesn't cut it.

Think of as: "the appeal level this item is almost certainly at least as good as." Safe for leaderboards.

Surprises Get Bigger Updates

Not all comparisons teach us the same amount. Expected outcomes? Small adjustments. Surprises? Big updates.

Makes sense: if the pendant with beats the one with , that just confirms what we already thought. Small tweak.

But if the underdog wins? Now that's information:

- Underdog's jumps up (you like it more than we thought)

- Favorite's drops (not as appealing as we believed)

- Both values shrink (definitive preference = strong evidence)

This means TrueSkill learns faster from surprising choices. New pendant beats several established favorites? The algorithm catches on fast.

Draws Work Too

Sometimes you genuinely can't decide. That's fine—TrueSkill handles draws.

A draw means the items are close in appeal. This:

- Pulls values toward each other (if they were different)

- Shrinks for both (we still learned something)

The draw_probability parameter (0.10 in our setup) tells TrueSkill how common draws are, which affects how much info a draw conveys.

The Full Flow

Here's what happens during a comparison:

Figure 4: The complete flow from user decision to database persistence. "Skip" doesn't update ratings—it's for pairs where neither option is acceptable.

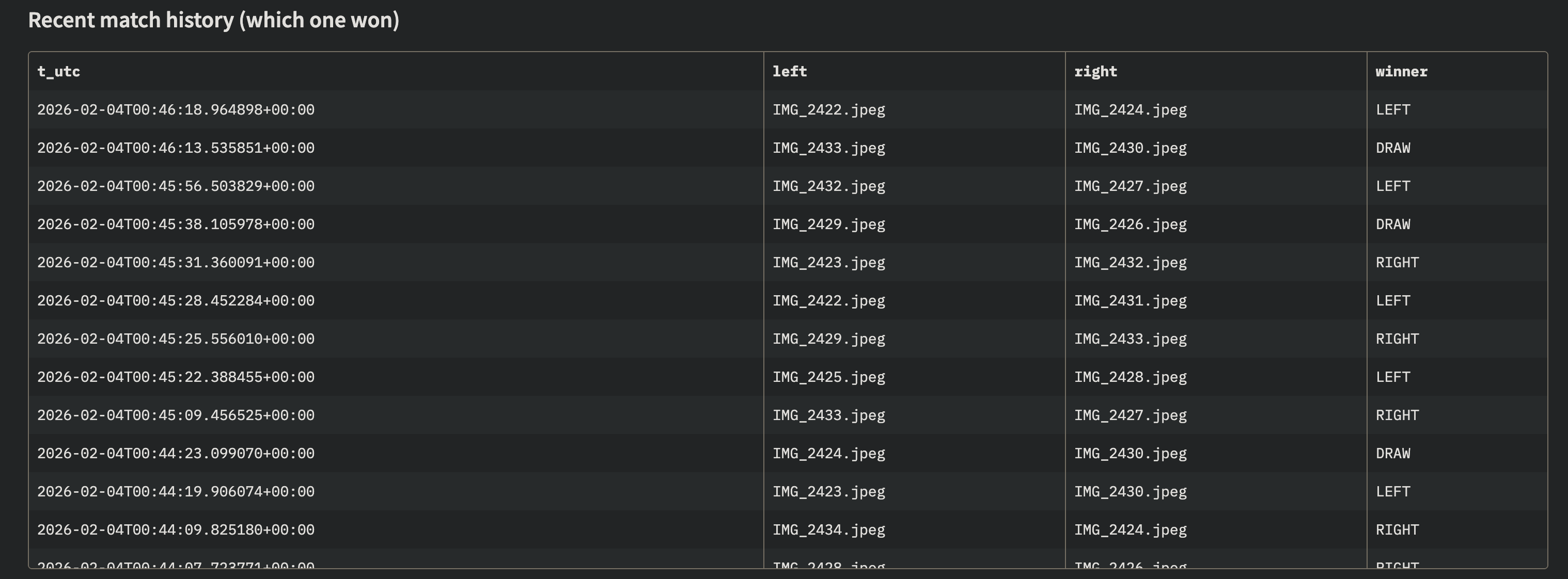

The Match History

Every comparison creates a record:

Figure 5: The recent matches panel shows comparison history with outcomes (Left/Right/Draw/Skip) and timestamps.

After a few dozen comparisons, you can see patterns forming.

This raises some questions:

- How do we rank with noisy preferences? (Conservative score handles this)

- Which pairs should we show next? (Not yet solved)

- How do we avoid showing the same pair twice? (Not yet solved)

- What if we keep cycling the same top 2 items? (Loop hell!)

TrueSkill solves #1. But picking which pairs to compare? That's a separate problem—and it's critical.

Part 3 tackles that.

Summary

TrueSkill gives us:

| Feature | Why It Matters |

|---|---|

| Two numbers (, ) | Track appeal and confidence together |

| Bayesian updates | Learn from every comparison |

| Shrinking | Uncertainty drops with evidence |

| Conservative score | Don't trust lucky first impressions |

| Draw handling | "Can't decide" is still useful data |

But we've been glossing over something: which pairs should we compare?

With 237 pendants and limited patience, random comparisons won't cut it. We need to be smart about which pairs will teach us the most.

That's active learning. And that's Part 3.

References

Next up: Part 3 - The Pair Selection Puzzle

Previous: Part 1 - The Pendant Problem

Elo, A. E. (1978). The Rating of Chessplayers, Past and Present. Arco Publishing. ↩︎

Herbrich, R., Minka, T., & Graepel, T. (2007). "TrueSkill™: A Bayesian Skill Rating System." Advances in Neural Information Processing Systems, 19. Microsoft Research. ↩︎ ↩︎

The conservative score is related to UCB (upper confidence bound) methods in bandit problems—except here we want the lower bound for stable rankings. ↩︎